Many website operators in Europe are at a loss: Is Google Analytics illegal? This is what recent decisions and statements by European Data Protection Authorities suggest, and some of them even say so directly. On closer inspection the situation is less black and white. Google Analytics can, in our view, be used in compliance with the GDPR. We will explain how and why. The decisions reflect a trend among data protection authorities towards a fundamentalistic and absolutistic view of data protection, trying to push the GDPR into a corner where many say it was not intended to be.

Following a series of complaints filed by the non-profit organization noyb.eu in 2020 against 101 EEA websites using Google Analytics or Facebook Connect, EEA data protection authorities have started issuing rulings against the websites, declaring their use of Google Analytics as noncompliant with the GDPR. The Austrian Data Protection Authority was first on December 22, 2021, with the French Data Protection Authority CNIL following on February 10, 2022. Since the European Data Protection Board (EDPB) "coordinated" the reaction to the complaints by noyb.eu supposedly with a model response, more such "copy & paste" decisions are to be expected (see also Kuan Hon's collection of links on the topic and her paper summarizing enforcement activities in the broader context of Schrems II).

Note that we have not been involved in any of the proceedings discussed here or other similar proceedings related to Google Analytics. This blog reflects the personal opinion of its author and not necessarily the view of any client (or even Google). We have been asked by publishers seeking independent advice on what they should do about their use of Google Analytics following the decisions mentioned. We analyzed the situation and came up with specific proposals. With this blog, we want to share our views and recommendations publicly; they and the related TIA are, however, not legal advice, provided for informational purposes only, and to be used at your own risk.

Before we do a deep dive, it is necessary to understand the bigger picture. It has become obvious that noyb.eu and many EEA data protection authorities want to force EEA website operators to switch to EEA-based solutions and in any event stop using Google Analytics regardless of how it is implemented. In our view, however, the discussion concerning the use of US-owned service providers appears to be, first and foremost, a political one. While there may be reasons for pushing in that direction, a discussion about the legality of services such as Google Analytics or of other US-based providers should be based on facts and law. We have the impression that this is not always the case, and even data protection authorities are today engaging in what appears to be a mere "power game" between some parties in Europe and in the US; in private discussions, representatives from data protection authorities also admit that they are simply clueless about how to reasonably deal with Schrems II.

The Google Analytics decisions seem to fall in this category. When we talk to our peers, many are worried that the principles set out in these decisions (and similar decisions, such as in the Google Fonts matter) will also be applied in other cases. The attempt to redefine the term "personal data" to no longer require identifiability is one example (we discuss it below). While we understand why some data protection authorities are pushing in that direction, we believe that de lege lata and de lege ferenda should be clearly distinguished. Carey Lening recently described the current trend as a dangerous game that regulators are playing on the Internet. The ones suffering today are the many European businesses and other organizations that want to properly implement state-of-the-art online techniques, but even with a lot of goodwill cannot understand the attitude and position of many EEA data protection authorities. They fear finding themselves between a rock and a hard place and hope that they can remain under the radar until the topic of international transfers is dealt with more reasonably again. We also have the impression that there are more important issues to be dealt with in data protection than the often only theoretical risk of US intelligence authorities accessing the data of offerings such as Google Analytics. The clear and present risk of ransomware and other cyberattacks is only one example.

The Austrian Case

The Austrian decision was the first and the most detailed one, which is why we will focus on it. The decision relies on the manner in which Google Analytics has been implemented in the case at hand. This is important because Google Analytics can be implemented in several different ways, which has an impact on its assessment under the GDPR (and the Swiss Data Protection Act, which follows the same concepts concerning international transfer). In the Austrian case an implementation was chosen as a target by noyb.eu that did not use various features available for data protection compliance. Accordingly, the fact that the authority found the implementation non-compliant does not mean that other implementations of Google Analytics are non-compliant, too. Also, key findings and arguments of the authority are in our view incorrect or at least questionable. We will discuss them further below.

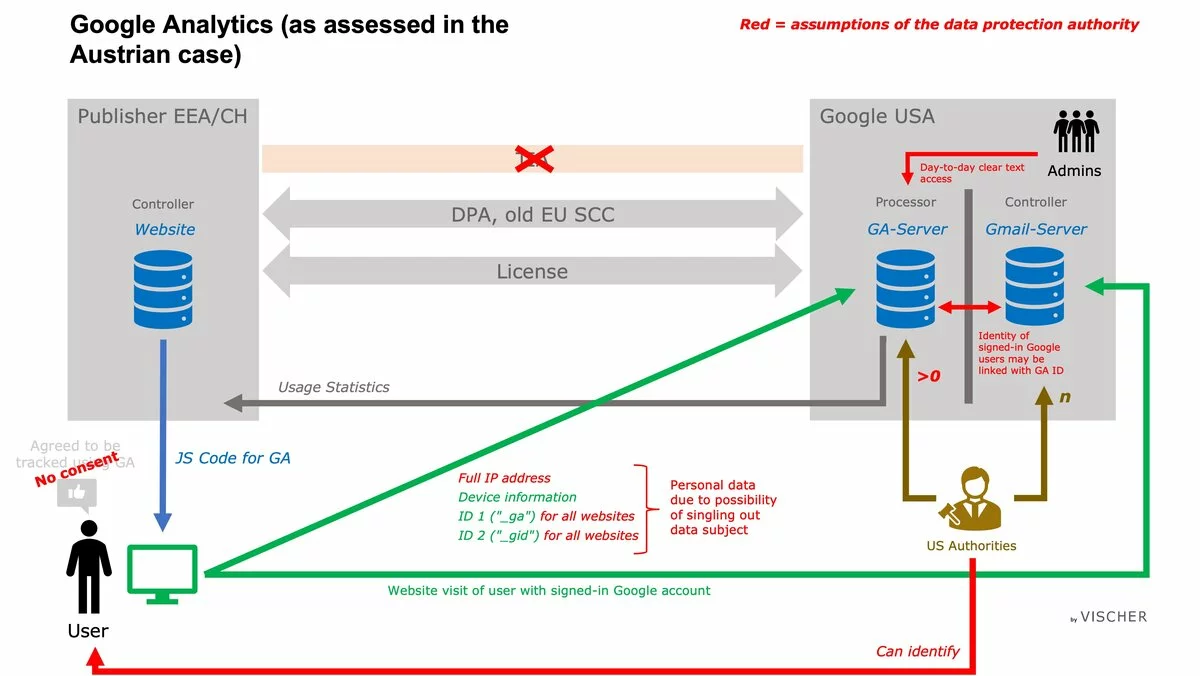

In the Austrian case, the authority according to its decision found or assumed the following (the references refer to the full-text decision in German):

- Google Analytics was used without the user having consented to it (p. 39);

- The website operator was the controller, and Google the processor (p. 32 et seq.);

- The website operator had a contract directly with Google LLC, i.e. a US organization (thus, Chapter V of the GDPR was directly applicable to the transfer) (C.6);

- The transfer was safeguarded by the old EU Standard Contractual Clauses (C.6, p. 35);

- The feature for IP anonymization had not been implemented correctly and, therefore, did not work (C.7, C.9);

- The transfer apparently occurred without a prior assessment of the risk of prohibited foreign lawful access pursuant to Section 702 FISA and EO 12.333 (as required per the ECJ decision of July 16, 2020 – Schrems II); this is not expressly stated in the decision, but the authority finds that the website publisher "continued" to use Google Analytics even after the Schrems II decision, which first introduced the obligation to perform such transfer impact assessments (p. 32);

- Every time the website was accessed this caused it to send Google two unique IDs, which the authority apparently believed permitted Google to track users across different websites; one of its arguments as to why a unique ID is identifiable information was that Google's intention is to use Google Analytics to collect information about website users from as many websites as possible, which argument only makes sense if one assumes that the website IDs collected by Google Analytics can be connected across all websites, broadening the uniqueness of the user's "digital footprint", as the authority pointed out (p. 29);

- Google Signals (which we will discuss in detail below) was not activated by the website publisher (C.4);

- At least one user of the website (i.e. the complainant) was logged into their Google account while using the website at issue (C.8);

- If a user is logged into their Google account while using the website, Google is able to link the Google Analytics data obtained from the user with data of the user's Google account (C.10), and the authority apparently believed that Google links such data with Google Analytics data once the user activates the option "Ads Personalisation" in his or her Google account – such option would otherwise not make any sense in the authority's view (p. 31, 39);

- The data sent to Google is not considered pseudonymized, because individuals can be singled-out (p. 38);

- Google has possession, custody and control of Google Analytics data in clear text, because it is technically able to access the data in that form (p. 38);

- Google's Transparency Report indicated that 0-499 requests were received in any relevant period (C.6, p. 35);

- Access requests by US intelligence authorities did occur (p. 32, 35);

- US intelligence authorities are able to track at least some users based on their unique IDs or IP addresses collected through Google Analytics when surfing on the Internet, based on pre-existing information they may have, and it is more than just a theoretical possibility that they are identified (p. 31 et seq., 39).

Furthermore, the "Recommendations 01/2020 on measures that supplement transfer tools to ensure compliance with the EU level of protection of personal data" of the EDPB were apparently considered as de facto binding by the authority. They were applied to the case without validation (see, for example, p. 37 et seq.).

The following chart illustrates the above assumptions and findings of the authority:

How Google Analytics should be used instead

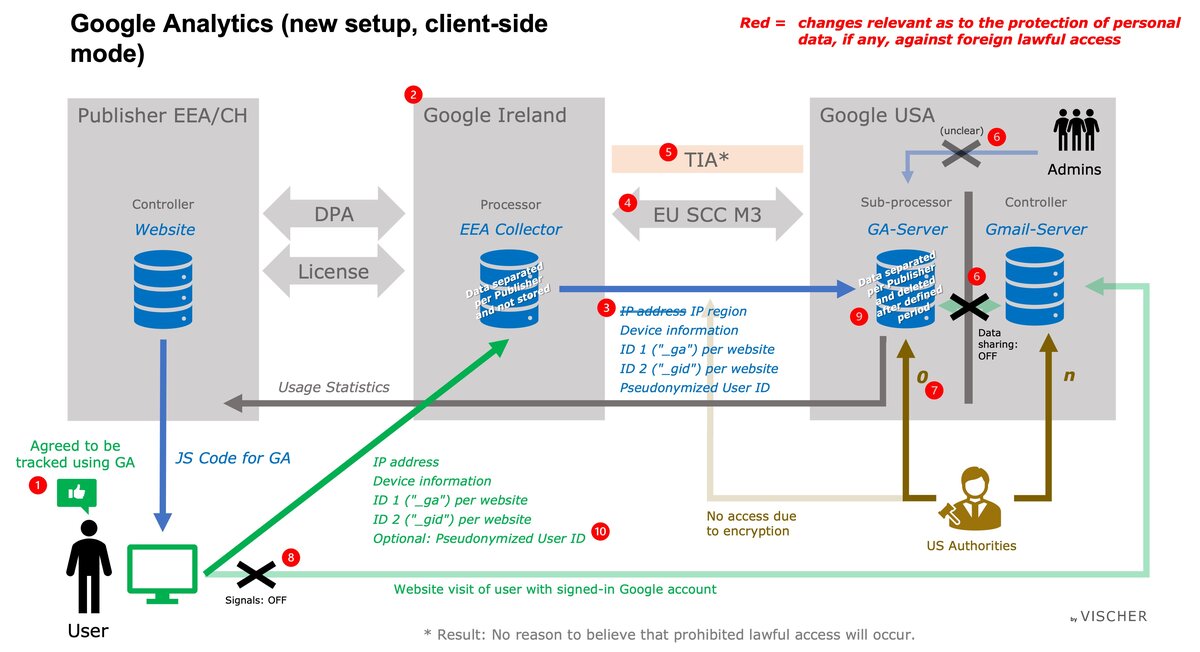

Today, it is possible to implement Google Analytics in a different manner that provides better compliance with the GDPR (and Swiss DPA). We will discuss only the "client-side" implementation of Google Analytics, which consists of including JavaScript code on each relevant page of a website, which will then set certain (first-party) cookies to generate two unique IDs, which are then transferred to Google for generating usage statistics for the website. Google Analytics can also be implemented using a server-side mode, which is more complicated, but which will result in less exposure of the user towards Google, as there is no direct communication between the user and Google (the information is collected by the publisher's server and then forwarded to Google for analysis). The majority of websites use the client-side mode.

In the setup that in our view can be considered compliant with the GDPR and Swiss DPA, nine elements of relevance for privacy compliance are different from the ones in the Austrian case. They are as follows:

- Each user is asked for their consent before Google Analytics is activated during website visits. In most cases, this is already done in the EU to comply with national ePrivacy rules, and even in Switzerland, asking for prior consent is becoming standard practice. Provided that users are informed accordingly, the consent can cover the transfer of personal data to the US including the possibility of such data being subject to lawful access under US law (this information is necessary as consent has to be "explicit"). This will permit relying on the exemption of Art. 49(1)(a) GDPR in connection with Chapter V of the GDPR (the same applies under the Swiss DPA). If properly implemented, this measure alone should be sufficient to resolve the issues found in the Austrian case and permit the usage of Google Analytics. If a publisher does not wish to rely on consent or fears that the data protection authority will not accept this solution, the following further measures should be considered.

- The publisher should not enter into (or continue to be a party to) a contract with Google LLC, but instead contract with Google Ireland Limited. This is already standard practice for EMEA customers, but some old contracts may still be in use and have to be updated. As a consequence, the data transfer initiated by the JavaScript code on the publisher's website to Google will no longer be subject to Chapter V of the GDPR; the transfer will occur within the EEA. Only then, in a second step, the data will be transferred to the US, but in this setup, Google Ireland Limited will be considered the exporter, and not the publisher. This also means that it will be for the Irish Data Protection Authority to assess the legality of such export; accordingly, the setup will, to a certain extent, take the publisher out of the "line of fire". Of course, the publisher – in its role as the controller – remains responsible for the compliance of the processing activity, but this is only an indirect responsibility on the basis of Art. 32 GDPR, and no longer under Chapter V of the GDPR. That said, we expect noyb.eu, as part of its general mission against US-owned service providers, to raise complaints against Google Ireland Limited for these transfers.

- The publisher should use the IP Anonymization feature of Google Analytics, which will cause Google Ireland Limited to strip the last part from the user's IP address within Europe before the user's data is passed along to Google LLC in the US. This feature is turned on by including some code on the website at issue.

- Google Ireland Limited has in place with Google LLC Module 3 of the new EU Standard Contractual Clauses (SCC). This is confirmed in their Google Ads Data Processing Terms (Section 10.3(b)(i)(A)).

- Google Ireland Limited has also undertaken a Transfer Impact Assessment ("TIA") for the transfer of any personal data to Google LLC, as per Clause 14 of the SCC and, by entering into the SCC, has warranted that it has "no reason to believe" that any prohibited lawful access will occur in the present case. Unfortunately, this TIA is not yet publicly available and has to be requested from Google by each publisher. This is possible based on Section 7.5.2(a) of the Google Ads Data Processing Terms. In addition, we have carried out our own TIA, independently of Google, for the transfer of data from Google Ireland Limited to Google LLC. It concludes that the risk of a prohibited lawful access in the next five years is 3.38 percent, which means that it would take about 335 years for the probability of at least one such lawful access to reach 90 percent. We believe that under these conditions, Google Ireland Limited indeed has no reason to believe that any prohibited lawful access will occur, thus satisfying the "Schrems II" and EDPB conditions for the data to be transferred to Google LLC, even if such data were considered personal data (which we believe is not the case for reasons discussed below).

- The publisher should make sure that the "Data sharing" option of Google Analytics is turned off. This can be done within the administration console of the service. It is not entirely clear, though, what the effect of this setting is. What is clear is that with the data sharing setting turned off, Google may, as a processor, only access the publisher's Google Analytics data for "maintaining and protecting" Google Analytics; Google will not use or have access to such data as a controller for its own or third party purposes. The setting apparently also affects access to the data by Google staff. According to Google, with the option turned on, Google staff will have access, including for support purposes; if it is turned off, it appears that this is not the case, since Google warns users that it "might not be able to help resolve technical issues". This indicates that Google staff will not have access to the data in the ordinary course of business but rather only under exceptional circumstances, for example if the entire service is at risk ("maintaining and protecting"). This appears to hardly ever happen, at least based on the status dashboard of Google Analytics: In 2021, there were only two cases, and it is not known whether Google staff made use of its ability to access customer data in clear text. We have not yet been able to find out to what extent Google restricts staff access to clear text Google Analytics data. Other providers are more explicit on this point, such as Microsoft with its "Lockbox" service, where it contractually undertakes that it (i.e. its staff) cannot access the content of the customer data without the customer's explicit approval in each case. Why is this relevant? It determines the probability that Google LLC as the importer is found to have "possession, custody or control" ("p/c/c") over the data at issue in clear text. Google only has to produce data over which it has p/c/c.
At this point, it is important to understand that the term "control" has a different meaning under the GDPR and US law. Under US law, even a processor (in terms of the GDPR) can be found to have "control" over data and, subsequently, be required to produce it to the authorities. Under the GDPR, "control" refers to controlling how data is processed, whereas under US law, "control" refers to the right to obtain the data as such ("legal" control) or actual access in the ordinary course of business even if there is no legal ownership or control ("day-to-day" control). In the present case, Google LLC as a mere processor can take the position that it has no legal ownership or control of the data. But can it argue that it also has no day-to-day control over the data? We believe that this may well be possible. First, a distinction has to be made between Google LLC's systems having access to the data in clear text, on the one hand, and Google LLC's staff (i.e. officers or agents), on the other hand. If the staff has no access, i.e. if data is within Google's systems, but nobody at Google can access it in clear text without reprogramming the system, then there is arguably no day-to-day control. Second, it is well accepted under US law that the mere technical possibility of gaining access is not sufficient to establish day-to-day control, in particular where such technical access is not possible in the manner the systems have been set up or at least is not part of routine business activity. See, for example, the work of Hemmings, Srinivasan and Swire for a discussion of p/c/c and underlying US case law. In the present case, any Google Analytics data is encrypted at rest, which means that only those system processes or staff members that have the necessary access privileges will be able to read it in clear text and such access will be granted only for as long and to the extent necessary (principle of least privilege, which Google applies). 
The fact that Google's systems themselves can access and decrypt data does, in our understanding, not result in day-to-day control if the systems are programmed to run Google Analytics without providing Google staff day-to-day access to their data unless data sharing is turned on. Whether Google staff may in extraordinary situations nevertheless be able to gain access to the data and its decryption key (e.g., through a break glass account), will likely be irrelevant against this background, as long as such access is not part of routine business activity. While we could not have all our factual assumptions confirmed so far, they are based on how hyperscalers operate; manual intervention is typically limited to a minimum. To be on the safe side, we have in our TIA assumed a lower than usual probability that Google LLC will succeed in showing that it has no p/c/c over customer data in clear text. If and when more information becomes available, a higher probability can be applied in the TIA. Note that Google LLC is required under the SCC to challenge any government request on the basis of these and other arguments available. While none of these arguments may in any event provide full protection against unwanted lawful access, in combination they reduce the probability of it being successful. This is reflected in our TIA.

- Google has stated publicly that in the last 15 years since the inception of Google Analytics it has never received any request from the US government under the relevant provisions (i.e. Section 702 FISA) for the production of the data at issue and further stated that "we don't expect to receive one because such a demand would be unlikely to fall within the narrow scope of the relevant law". Note that Executive Order 12.333, on which basis signal intelligence can be performed on telecommunications backbones outside the US and which is the second US law provision relied upon by the ECJ in the Schrems II decision, is not relevant in the present case as all transfers between Google Ireland Limited and Google LLC are encrypted and not accessible in clear text. The same is true for Section 702 FISA surveillance requests to US providers on US territory other than Google LLC.

- Google LLC is acting as a sub-processor of Google Ireland Limited when processing data for Google Analytics. As such, it is not permitted to use data for its own purposes as a controller. This would require permission from the publisher, which does not exist (unless data sharing is turned on). Accordingly, Appendix 1 to the Google Ads Data Processing Terms only refers to the processing of personal data for the purposes of its (i) services as a processor and (ii) technical support. Any actual joinder with personal data collected by Google LLC as a controller (e.g., when providing its Gmail service to consumers or permitting consumers to be logged in with their Google account while surfing) is not permitted and does, according to Google, not happen unless Google LLC acts as a controller (namely if "Data sharing" is turned on). The scenario discussed in the Austrian case, where a user activated "Ads Personalisation" and could, thus, cause Google to link their Google account identifier with Google Analytics data of a particular website is incorrect and appears to be the result of a misunderstanding of how the service works. It is not up to the user whether Google creates some kind of link between the Google Analytics data and the Google account identifier. In fact, there is no such link, and it remains under the publisher's control whether Google, as a controller, tracks signed-in users on the publisher's website. Here, some technical details are relevant: The implementation of Google Analytics on a website enables Google as a controller to receive the information that a specific Google account user has visited a particular website, only if (i) the user has activated the "Web & App Activity" setting in their Google account; (ii) the user has chosen to include activity from sites that use Google services (i.e. 
the publisher's website); (iii) the user has activated the Ads Personalisation setting, (iv) the user is logged into their Google account in the same browser, while visiting that website, and (v) the publisher has activated "Signals" in its Google Analytics implementation. All these requirements, in particular the activation of Signals, must be met cumulatively at the time of the user visiting the website. The main purpose of Signals is to allow publishers to, among other things, obtain aggregated demographics and interest insights into the traffic on their website. That said, even with Signals turned on, Google LLC remains a (sub-) processor with regard to the data collected using Google Analytics; the publisher controls any sharing of Google Analytics data through the "Data sharing" option, as discussed above. Hence, in the default configuration, Google Analytics data remains within Google Analytics and is only linked to the Google Analytics identifiers supplied by the publisher for each user; it is never linked to any Google account identifier. Even if Signals is enabled at the time of a website visit, data related to the visit (but not the Google Analytics identifier or other data collected by Google Analytics) is stored in the separate databases maintained by Google for its own purposes (i.e., the Google account). Based on the information available, we understand that this occurs in parallel, but is otherwise unrelated, i.e. the Google Analytics identifiers and the Google account identifier are not linked or joined. It appears that these facts were not considered in the Austrian case, but they would have been key in our view: First, the Austrian data protection authority determined that Signals was not used. Accordingly, Google did not track the website visits of signed-in Google account users. 
It follows that even technically, it could not link the two sets of data, because there would be no corresponding data set on the part of Google; the activation of Ads Personalisation is irrelevant. This is where the Austrian authority erred: It failed to consider Signals. Second, even where such tracking by Google (as a controller) occurred on the basis of Signals being turned on, Google claims that the identifiers used for Google Analytics and Google accounts are not linked. For two further reasons, we can leave open whether it would theoretically be possible to create such a link: First, we recommend turning Signals and Data sharing off. Second, when determining whether a data subject is identifiable, account should be taken only of those means "reasonably likely to be used" (Recital 26 of the GDPR). In the present context (linking the two datasets would represent a breach of contract, the systems are not programmed to perform such linking and Google also does not need it) it is in our view not reasonably likely that such linking occurs. The fact that a particular entity has control over two separate and separated datasets does not, as such, imply that they are linked. Under the GDPR (as well as the Swiss DPA) they have to be considered separately with regard to the question as to whether they represent personal data (see discussion below).

- When set up in this manner, the data collected for Google Analytics is segregated by website and publisher and cannot be linked across websites (unless the publisher itself adds a unique ID of its own, see next point). As the Google Analytics IDs are obtained through first-party cookies, they are limited to the website for which they have been obtained. The same user will, therefore, have different IDs when surfing on different websites, even if they belong to the same publisher. This will further prevent any linking between the different datasets. In the Austrian case, the data protection authority appears to have implicitly assumed that the IDs are the same across all websites visited by the user, thus broadening their digital footprint and increasing the likelihood of identification. Furthermore, Google LLC will delete the tracking data collected in connection with a particular website visit after 2 to 50 months, depending on the publisher's setting and the Google Analytics product used. We advise setting the retention period as short as feasible.

- Google Analytics allows the publisher to track logged in users across various websites by permitting them to include in the data sent to Google Analytics a proprietary User ID (it is not permitted, however, to generate such an ID based on device fingerprinting or the like). If this is done, then the ID should not permit identification of the user (e.g., do not use the e-mail-address in clear text).
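
The last point can be illustrated with a minimal Python sketch. It assumes the publisher derives the User ID from an internal account identifier with a keyed hash (HMAC-SHA256) and keeps the key on its own servers only. The function name `pseudonymous_user_id`, the key and the sample identifier are hypothetical and not part of Google Analytics; whether an ID derived in this way is sufficient in a given case remains for the publisher to assess.

```python
import hashlib
import hmac


def pseudonymous_user_id(internal_id: str, secret_key: bytes) -> str:
    """Derive a stable User ID that does not reveal the internal identifier.

    Assumption of this sketch: the secret key never leaves the publisher,
    so the recipient of the ID cannot recompute it from, say, an e-mail address.
    """
    digest = hmac.new(secret_key, internal_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


# Hypothetical values for illustration only.
key = b"publisher-side-secret"
user_id = pseudonymous_user_id("jane.doe@example.com", key)
print(len(user_id))                      # 64 hex characters
print("jane.doe" in user_id)             # False: the e-mail is not visible in the ID
```

The same input and key always yield the same ID, so logged-in users can still be recognized across the publisher's websites, while a different key yields an unrelated ID.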

The following chart illustrates the above:

When implemented as described above, we believe that Google Analytics can be operated in compliance with the GDPR (and the Swiss DPA). This is true even if one were to assume that the data collected qualifies as personal data (as the Austrian data protection authority and CNIL have; we explain below why we do not believe that this is correct).
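
The relationship between the two TIA figures quoted above (3.38 percent over five years, and 335 years for a 90 percent chance) can be reproduced with a short calculation. The sketch below assumes a constant, independent risk per unit of time; this is one model that yields the quoted figure and not necessarily the method used in the TIA itself. The function name is hypothetical.

```python
import math


def years_until_probability(p_window: float, window_years: float, target: float) -> float:
    """Years until the chance of at least one event reaches `target`.

    Assumption of this sketch: the risk is constant and independent over time,
    so the probability of no event decays exponentially.
    """
    # Constant yearly rate implied by the probability observed over the window.
    rate = -math.log(1.0 - p_window) / window_years
    # Solve 1 - exp(-rate * t) = target for t.
    return -math.log(1.0 - target) / rate


years = years_until_probability(p_window=0.0338, window_years=5, target=0.90)
print(round(years))  # about 335
```

Under this assumption, a 3.38 percent risk over five years corresponds to a yearly risk of roughly 0.69 percent, which is why it takes several centuries for the cumulative probability to reach 90 percent.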

Seven steps to take

Hence, a publisher should take the following seven steps when implementing Google Analytics in a client-side mode:

- Ensure that tracking will happen only after the data subject has (i) been informed that their data will be transferred to the importer in the US, where it may be subject to local lawful access, and that they can withdraw their consent at any time, and (ii) consented to such usage and transfer and not withdrawn that consent. The notice should state that tracking IDs and, where applicable, IP addresses and device information are shared with Google in the US. If properly implemented, this measure is in principle sufficient for permitting the use of Google Analytics. The further steps are intended as additional protection beyond consent.

- Ensure that the contract for using Google Analytics (including the Data Processing Agreement) is concluded with Google Ireland Limited, and not Google LLC. In fact, all new Google Analytics contracts in EMEA (both the free and paid version) are concluded with Google Ireland Limited. Some older contracts may have to be updated.

- Ensure that IP Anonymization is activated and implemented properly.

- Ensure that the Data sharing option is kept off within Google Analytics. The Data sharing option is deactivated by default.

- Ensure that the Signals option is not activated within Google Analytics.

- If you are using proprietary User IDs, make sure that they do not permit user identification (e.g., no e-mail addresses in clear text).

- Review the TIA covering the data transfer between Google Ireland Limited and Google LLC and decide, as the controller, whether you agree with Google Ireland Limited that there is no reason to believe that any personal data collected from your users by using Google Analytics will be subject to lawful access by US intelligence authorities.
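
To illustrate what the IP Anonymization step does to an address, the following Python sketch zeroes the trailing bits of an IP address. The bit counts (the last 8 bits for IPv4, the last 80 bits for IPv6) follow Google's public description of the feature but are treated as assumptions here. The function name `truncate_ip` is hypothetical, and the actual truncation is performed by Google, not by code on the publisher's side.

```python
import ipaddress


def truncate_ip(address: str, v4_bits: int = 8, v6_bits: int = 80) -> str:
    """Zero the trailing bits of an IP address.

    The default bit counts are assumptions of this sketch.
    """
    ip = ipaddress.ip_address(address)
    drop = v4_bits if ip.version == 4 else v6_bits
    # Build a mask with the lowest `drop` bits cleared.
    mask = ((1 << ip.max_prefixlen) - 1) ^ ((1 << drop) - 1)
    # Rebuild an address of the same family from the masked integer.
    return str(type(ip)(int(ip) & mask))


print(truncate_ip("203.0.113.57"))                          # 203.0.113.0
print(truncate_ip("2001:db8:85a3:8d3:1319:8a2e:370:7348"))  # 2001:db8:85a3::
```

After truncation, all addresses in the same block map to the same value, which is the reason the feature reduces the precision of the IP address as an identifier.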

Dissecting the Austrian Decision

We assume that large parts of the Austrian decision have been prepared by the EDPB to ensure that the various data protection authorities across the EEA will follow a unified approach to the various complaints of noyb.eu. Hence, it makes sense to analyze its legal reasoning more closely. In our view, several conclusions and interpretations made by the authority are not convincing and are partially based on wrong assumptions.

To begin with, we believe that the authority's analysis of whether there is personal data is not correct for the following reasons:

- The authority takes the position that the mere ability to single out users of a website is sufficient to qualify usage data as personal data (p. 28). It does so by arguing that Recital 26 of the GDPR refers to singling out as a possible means of identification. In our view, however, it is not permitted to conclude that, conversely, every case of singling out automatically leads to identification. We showed a few years ago that data permitting the singling out of an individual does not, as such, amount to personal data, and we described another test for determining whether personal data exists.

The authority's reference to the "online identifier" in the definition of personal data pursuant to Art. 4 GDPR has the same catch: While identification may be possible by using an online identifier, the use of an online identifier does not necessarily result in the identifiability of a data subject. Identifiability is, however, a precondition for the existence of personal data. In all cases, the definition of personal data still requires that the data subject becomes identifiable, which the data protection authority has in our view not shown.

Further, if a website is used by both human beings and automated agents (which happens frequently), they will both generate the same kind of online identifier, and it may not be possible for the publisher to distinguish them, let alone find out which particular human being has been tracked. But can data generated by robots be personal data? Certainly not. This is one of the reasons why it is essential that the data itself provides a link to a particular human being, even though the data protection authority is correct in its conclusion that this does not have to be the person's "face" or name.

Notably, the ECJ has so far left open the question as to whether cookies (and their identifiers, as in the present case) qualify as personal data (Case C-673/17, N 71).

- The Austrian authority is at least consistent with its own arguments when claiming that it is not possible to pseudonymize identifiers because the data subjects could still be singled out (p. 38). While this is a logical consequence of the foregoing theory, it is also further proof that it is not supported by the GDPR. If the theory were correct, then even a fully encrypted identifier where the key for decryption has been lost would still amount to personal data, because such an encrypted identifier would still be unique as opposed to any other identifier encrypted in the same manner. However, there would be nobody on earth who could determine to whom such identifier relates.

The flaw in the authority's theory is that it is mixing up the possibility to identify a particular user with the possibility to individually target or count that user. The former is relevant with regard to the definition of personal data; the latter is not, under current law. The authority's attempt to redefine personal data to include data that does not identify, but singles out individuals, is probably the most problematic part of the entire decision, because it would have the most far-reaching consequences, if upheld. We see repeated attempts of various EEA data protection authorities to move the GDPR in that direction by continuously (but in our view wrongly) alleging that singling out individuals equals their identification. It is, of course, possible to change the definition of "personal data" to better protect individuals in cases of "anonymous" targeting (which is what this is all about), but so far, this has not happened. It should be done in a democratic manner by parliament, not by data protection authorities.

- The authority argues that it is even clearer that the online identifier is personal data because it can be combined with other data collected during the use of Google Analytics (p. 29). This argument is again incorrect in our view, because the additional data at issue increases only the quantity, not the quality of the data in terms of identification of a data subject. In other words: Adding more of the same does not increase the identifiability in the present case. It still needs to be shown that the data collected can be matched with other data that permits identification, and such other data does not exist because the identifiers are either volatile, too imprecise or do not exist outside the context of that particular website.

Besides, as shown above, the authority has wrongly assumed that the linking of a user's Google account identifier with their Google Analytics identifier is under the user's sole control. This is in general incorrect, and it was also incorrect in the case at hand: The authority itself determined that Signals had not been activated. Since Signals was not used, the visits of signed-in Google users were not tracked by Google as a controller. Moreover, even where such tracking is activated, there is no linking between Google Analytics identifiers and Google account identifiers according to Google, at least if data sharing is turned off, and there is also no reason for it to happen.

- The authority further argues that it is the purpose of at least the free version of Google Analytics to be implemented on as many websites as possible, implying that this will allow Google to track users across all websites (p. 29). This is not the case as explained above.

- The authority claims by reference to the ECJ decisions C-434/16 (Nowak) and C-582/14 (Breyer) that it is sufficient that an identification of the data subject is possible by just anybody ("irgendjemand", p. 29 et seq.). This is not what the ECJ has ruled. To the contrary, the ECJ has made it clear that if the data needed to identify a data subject is held by two different persons, it has to be determined how probable it is that the two will combine their data. Specifically, the ECJ stated in C-582/14, N 45 et seq.: "However, it must be determined whether the possibility to combine a dynamic IP address with the additional data held by the internet service provider constitutes a means likely reasonably to be used to identify the data subject. / Thus, as the Advocate General stated essentially in point 68 of his Opinion, that would not be the case if the identification of the data subject was prohibited by law or practically impossible on account of the fact that it requires a disproportionate effort in terms of time, cost and man-power, so that the risk of identification appears in reality to be insignificant." This "relative" approach in determining whether personal data exists is also applied under the Swiss DPA (BGE 136 II 508). It means that the same piece of information can, in the hands of one person, qualify as personal data, whereas in the hands of another person it will not.

- What is correct, though, is that the question of the identifiability of the data subject cannot be limited to the controller (here: the publisher). It has to be assessed for any person that has access to the data at issue, i.e. including any processor (here: Google LLC). But we do not agree with the decision that the mere technical possibility to identify matters (p. 30 and p. 31). The ECJ as well as the Swiss Federal Court made it clear that there must also be an intention to make use of the means for identification. As explained in N 45 of C-582/14, it has to be assessed whether there are legal restrictions ("prohibited by law") or if the efforts necessary to identify a data subject outweigh the benefits of doing so ("a disproportionate effort"), which tests may both lead to the conclusion that the "risks of identification appear in reality insignificant". If so, there is no personal data.

- To that end, the Austrian authority concludes that Google is able to link the data collected through Google Analytics with the identity of a user provided such user is, at the same time, logged into its Google account (p. 30 et seq.). We have explained already above that this is not correct.

- The Austrian authority also assesses whether US intelligence authorities are able to link the data collected through Google Analytics with the identity of a user. It argues that it "cannot exclude" that these authorities use IP addresses and online identifiers available to them from other sources and can use them to identify users within the Google Analytics dataset (p. 31 et seq.). It concludes by reference to the ECJ decision C-311/18 that this is not just a theoretical risk. It appears that the authority takes an improper shortcut. The standard is not whether identification cannot be excluded, but rather whether it is reasonably possible – and this has not been shown in the case. The ECJ decision cited does not deal with Google Analytics, and provides no evidence of the lawful access requests relevant in this case. In its decision, the authority neither establishes whether US intelligence authorities are able and permitted to access Google Analytics data, nor whether they actually do so, nor whether they can identify users within such datasets. Rather, the authority seems to conclude that because Google, as a company, does receive lawful access requests, such lawful access requests must also apply to Google Analytics. We find this problematic:

First, Google has made it clear that there have never been any requests from US intelligence authorities for Google Analytics data; hence, such access has so far been a theoretical risk. We do not know whether Google also made this statement during the proceedings before the Austrian authority; it appears that it did not, and if so, this was a mistake.

Second, even if US intelligence authorities already had in their hands IP addresses or online identifiers of users of certain targets, the data collected by Google Analytics would be useless for tracking them: The online identifiers used by Google Analytics are different (they vary from website to website), and IP addresses are usually assigned dynamically, i.e. they also change constantly. It is not possible – without having access to the internet service provider database – to track a user based on the IP address (see above, C-582/14, N 45 et seq.). Notably, none of these facts were considered by the authority. What remains is an approximation by device fingerprinting, as suggested by noyb.eu. While device fingerprinting can result in a pseudo-unique identifier and may be useful as a second-best choice where cookies do not work, it is a very unreliable means of tracking one particular individual, let alone across the Internet. For instance, in a study from 2019, only about 33% of the browser fingerprints collected on iPhones from a particular audience were unique. The numbers would be lower if more websites were included and there was a larger audience. The study also showed that these fingerprints have a tendency to change often (nearly 10% of the devices changed their fingerprints multiple times within 24 hours). The study used 31 different characteristics to create the fingerprint, which is far more than the information collected by Google Analytics. Hence, even when using device fingerprinting, it is highly unlikely that US intelligence authorities could identify a particular data subject, even if they were to use it – for which there is no indication. In fact, there are much easier and much more precise ways for an intelligence authority to track a particular individual both on the Internet and in real life using his or her mobile phone. They are also used in Europe.
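To make the notion of fingerprint "uniqueness" more tangible, the following is a minimal sketch of how such a share could be computed. It rests on assumptions of ours: that uniqueness means the fraction of observed devices whose fingerprint occurs exactly once in the sample, that the function name `unique_share` is hypothetical, and that the six sample devices with four characteristics each are invented. It does not reproduce the method or data of the study mentioned above.

```python
from collections import Counter


def unique_share(fingerprints):
    """Fraction of observed devices whose fingerprint occurs exactly once."""
    counts = Counter(fingerprints)
    singletons = sum(1 for n in counts.values() if n == 1)
    return singletons / len(fingerprints)


# Hypothetical sample: (model, OS version, language, screen size) per device.
sample = [
    ("iPhone", "15.3", "de-AT", "390x844"),
    ("iPhone", "15.3", "de-AT", "390x844"),
    ("iPhone", "15.3", "de-AT", "390x844"),
    ("iPhone", "15.2", "de-AT", "390x844"),
    ("iPhone", "15.3", "en-GB", "428x926"),
    ("iPhone", "15.3", "de-AT", "375x667"),
]

print(f"{unique_share(sample):.0%} of devices have a unique fingerprint")
```

In this invented sample, three of the six devices share an identical fingerprint and cannot be told apart. The more homogeneous the devices and the fewer characteristics are collected, the larger that indistinguishable group tends to become.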

Third, it is questionable whether Section 702 FISA permits the US intelligence authorities to order the disclosure of the Google Analytics data (see our TIA). Hence, the risk of identification of a data subject based on Google Analytics data appears to be of merely theoretical nature.

If one were to accept that the use of Google Analytics results in the transfer of personal data to the US, one needs to answer the question whether such transfer is in compliance with Chapter V of the GDPR. Here, we find the following considerations of the authority problematic:

- The authority argues that no consent had been obtained. While this is correct on the part of the publisher of the website, the user did apparently consent vis-à-vis Google LLC to have his or her data from visiting the publisher's website used by Google as a controller. This is relevant because according to the decision (p. 31), the data collected by Google as a controller was a key factor for the authority to conclude that the Google Analytics data is considered personal data. This is based on the assumption that it was at least technically possible to link the two datasets, i.e. the Google Analytics data and the Google account data. If that were correct (which it is likely not in the present case), and if such data resulting in the identification of the website user had already been transferred to Google LLC with the user's consent (due to the user's settings within his or her user account), then the authority should have considered Art. 49(1)(a) GDPR as grounds for justifying the transfer. It did not.

- The authority considers it "obvious" that Google LLC qualifies as an Electronic Communications Service Provider ("ECSP") and is, thus, subject to Section 702 FISA. The authority concludes that because of that, Google LLC is required to provide the US authorities with "personal data" (p. 35). It does not further differentiate. Based on the fact that Google LLC states on its transparency website that it had "0-499" requests in each past reporting period, the authority concludes that it "regularly has such requests" (ibid.). Again, no further considerations are made. The authority neither evaluates whether the data processed for Google Analytics is indeed subject to downstream surveillance obligations pursuant to Section 702 FISA, nor whether Google LLC really had requests for such data. With regard to the former, there are reasons to conclude that it is not (see our TIA), and with regard to the latter, Google has said that there have been no such requests since the inception of Google Analytics (see above).

- The authority's conclusions do not come as a surprise, though. When reading the decision, it appears that Google did not really provide the authority with the necessary information and arguments as to why it had no reason to believe that prohibited lawful access requests could or did occur. Rather, Google seems to have pointed the authority to its various data security measures, which are indeed not relevant for the questions at issue. There is no indication in the decision that Google explained why it believed that Google Analytics data is, in fact, not subject to downstream surveillance requests as per Section 702 FISA or why it believed that the Google Analytics data at issue was not to be considered in its possession, custody or control given the various contractual, technical and organizational measures. For instance, in the authority's opinion, the mere technical ability of an ECSP to access personal data in clear text leads to the obligation of the ECSP to produce such data in clear text upon request (p. 38). This is not correct. The data at issue must also be considered under the ECSP's "control" (which in turn requires day-to-day or legal control, which requirement may not be met, as described above) and it must be the content of communications between non-US persons (which it arguably is not).

- We do note that Google's whitepaper "Safeguards for international transfers with Google's advertising and analytics products" focuses on traditional data security, rather than on measures that prevent foreign lawful access. Section 702 FISA and EO 12333 are discussed on only 1.5 pages of the 20-page document. Likewise, we see many TIAs which either discuss lawful access laws only in general terms or discuss only traditional data security. Instead, a proper TIA should analyze the probability of a prohibited lawful access occurring, taking into account all circumstances of the case. Many TIAs, unfortunately, do not address this question. In such cases it does not come as a surprise that data protection authorities conclude that there is a relevant risk of foreign lawful access.

All in all, the above thoughts show that it is far from clear that "the use of Google Analytics is illegal" in Europe, as Max Schrems, honorary chair of noyb.eu, has claimed. The Austrian case demonstrates, however, that the legal analysis very much depends on the specific facts of the case and on the parties involved in the proceedings presenting the authority with the relevant facts and arguments. It will be interesting to see whether the decisions of the data protection authorities will be challenged in court and how courts, potentially the ECJ, will deal with the above questions. Until the situation has been clarified, we believe website publishers should take the steps described above to minimize their risks, unless they want to move away from US-owned service providers. From a purely legal point of view, the decisions rendered only apply to the cases that have been considered by the authorities at hand. To our knowledge, no fines have so far been imposed on publishers. Yet, in view of the current situation concerning international transfers of personal data, we would not be surprised to see more decisions such as the Austrian one even where publishers follow the steps described above.